Fine-tuning Mistral for an enhanced content search experience (part II)

A backend perspective

Legacy article

This post reflects an earlier stage of Empathy Platform development. Some of the tools, integrations, or approaches described here are no longer in use in our current stack.

Since then, our focus has evolved towards self-hosted, private, and sustainable AI infrastructure, where compute is treated as part of the product itself. All AI compute is currently run on Empathy’s own GPU environment, hosted in our net-zero energy, bioclimatic private cloud in Asturias.

Rather than updating this article to fit our current approach, we’ve chosen to preserve it as a record of our R&D history and innovation journey.

For our current approach and latest developments, explore our main blog section.

This is the second post in a four-part series focusing specifically on the backend perspective. The first post provided an overview, and subsequent posts will dive deep into the infrastructure and UX perspectives.

As we said in the previous post, we’re always striving to push the boundaries of innovation, especially in search experiences. For this series, we’ll focus specifically on how we improved our developer portal search capabilities.

This post details our backend journey, highlighting the technical processes and the challenges we overcame in fine-tuning Mistral for our platform. Here are some of the insights we gained along the way.

The backend journey

The backend team embarked on this project with the intention of creating a comprehensive and neutral dataset for the Mistral model. To achieve this, three microteams were formed, each tasked with generating diverse data sources.

Backend development process:

1. Generate datasets using diverse indexing methods.
2. Unify the datasets into one.
3. Fine-tune the Mistral model with the combined dataset, which is based on the official Empathy Platform documentation.
4. Deploy the model in the EPDocs portal.

Diverse data sources

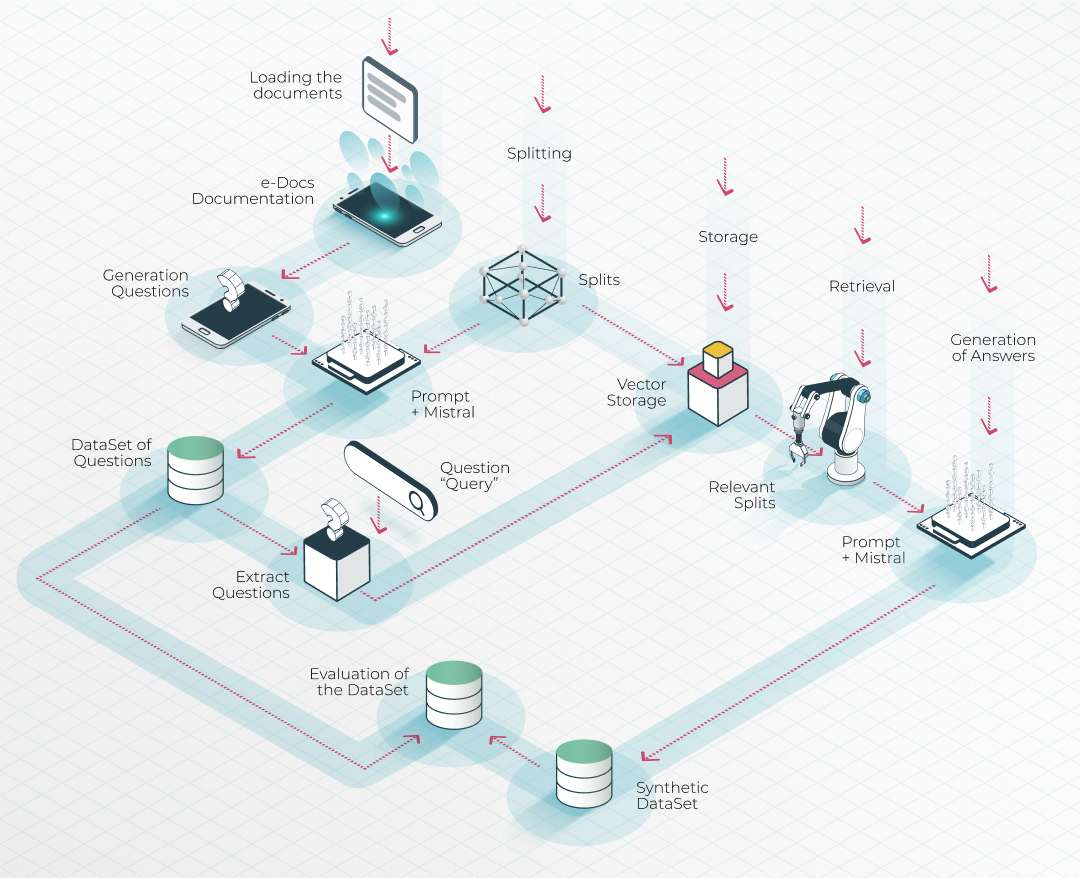

- Team one & team two used an in-house Retrieval Augmented Generation (RAG) system to generate datasets locally based on the Empathy Platform documentation.

- Team three used a Python library with Mistral to generate datasets of questions.

This multi-source approach ensured a well-rounded dataset for the model.

Data processing with RAG: indexing and question generation

For the RAG dataset generation approach, the documentation in the Empathy Platform portal was split into chunks, which were converted into embeddings and stored in a vector database. Questions were then generated synthetically, and the system answered them by combining the retrieved documentation with the Mistral model.
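The indexing and retrieval steps can be illustrated with a minimal sketch. It is not the in-house RAG system: the hashed bag-of-words `embed` function stands in for a real embedding model, `InMemoryVectorStore` stands in for a vector database, and all names, sample texts, and the prompt wording are assumptions made for illustration.

```python
import hashlib
import math
import re

DIM = 64  # size of the toy embedding vectors (arbitrary, for illustration)


def chunk_text(text, max_words=40):
    """Split a document into consecutive chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text):
    """Toy embedding: hash each token into one of DIM buckets, then L2-normalise.

    This is a stand-in for a real embedding model.
    """
    vec = [0.0] * DIM
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        bucket = int(hashlib.md5(token.encode("utf-8")).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


class InMemoryVectorStore:
    """Hypothetical stand-in for a vector database."""

    def __init__(self):
        self.items = []  # list of (chunk, vector) pairs

    def add(self, chunk):
        self.items.append((chunk, embed(chunk)))

    def search(self, query, k=1):
        """Return the k chunks with the highest cosine similarity to the query."""
        q = embed(query)
        scored = [(sum(a * b for a, b in zip(q, vec)), chunk) for chunk, vec in self.items]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [chunk for _, chunk in scored[:k]]


def build_prompt(question, context_chunks):
    """Combine retrieved chunks and a question into a prompt for the model."""
    context = "\n".join(context_chunks)
    return f"Answer using only this documentation:\n{context}\n\nQuestion: {question}"


if __name__ == "__main__":
    store = InMemoryVectorStore()
    for chunk in chunk_text("The search API returns ranked product results. " * 3, max_words=7):
        store.add(chunk)
    question = "What does the search API return?"
    print(build_prompt(question, store.search(question, k=1)))
```

In a setup like this, each synthetic question is paired with the chunks retrieved for it, and the resulting prompt is sent to the model to produce the answer that ends up in the dataset.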

Unifying the datasets

Once the datasets from the three teams were prepared, they were standardized into a common format, creating a robust combined dataset. This step was crucial for effectively fine-tuning the Mistral model.
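As a rough sketch of what this standardization step might look like, the snippet below maps records with different field names onto one question/answer schema, drops incomplete entries and duplicate questions, and serializes the result as JSON Lines. The field names (`question`, `query`, `q`, and so on), the source labels, and the choice of JSON Lines are assumptions; the post does not describe the actual formats used.

```python
import json

# Hypothetical field names each team might have used for the same concepts.
QUESTION_KEYS = ("question", "query", "q")
ANSWER_KEYS = ("answer", "response", "a")


def first_present(record, keys):
    """Return the first non-empty string value found under any of the keys."""
    for key in keys:
        value = record.get(key)
        if isinstance(value, str) and value.strip():
            return value.strip()
    return None


def normalise(record, source):
    """Map a team-specific record onto the shared schema, or None if incomplete."""
    question = first_present(record, QUESTION_KEYS)
    answer = first_present(record, ANSWER_KEYS)
    if question is None or answer is None:
        return None
    return {"question": question, "answer": answer, "source": source}


def unify(datasets):
    """Merge {source_name: [records]} into one list, dropping duplicate questions."""
    seen = set()
    unified = []
    for source, records in datasets.items():
        for record in records:
            item = normalise(record, source)
            if item is None:
                continue
            key = item["question"].lower()
            if key in seen:
                continue  # keep only the first answer for a repeated question
            seen.add(key)
            unified.append(item)
    return unified


def to_jsonl(items):
    """Serialise the unified records as JSON Lines, one record per line."""
    return "\n".join(json.dumps(item, ensure_ascii=False) for item in items)
```

Keeping a `source` field on every record makes it possible to check later how much each team's data contributes to the combined dataset.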

Fine-tuning and deployment

With the unified dataset ready, the next step was fine-tuning the Mistral model. This involved a custom script and workflow to tailor the model to our Empathy Platform documentation. The fine-tuned model was then deployed on the EPDocs documentation portal, ready for users to interact with.
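The custom script itself is not shown in this post. As a hedged illustration of the kind of preparation such a workflow typically includes, the sketch below formats question/answer pairs with the `[INST] ... [/INST]` markers used by Mistral's instruction-tuned models and holds out a validation split. Whether the team used this template, an instruct variant, or a split like this is an assumption, and the function names are hypothetical.

```python
import random


def to_instruct_text(question, answer):
    """Format one pair using the [INST] ... [/INST] instruction markers.

    The beginning- and end-of-sequence tokens are deliberately left out here,
    on the assumption that the tokenizer adds them.
    """
    return f"[INST] {question} [/INST] {answer}"


def train_val_split(items, val_fraction=0.1, seed=42):
    """Shuffle deterministically and hold out a fraction of items for validation."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n_val = max(1, int(len(shuffled) * val_fraction))
    return shuffled[n_val:], shuffled[:n_val]


if __name__ == "__main__":
    pairs = [(f"Question {i}?", f"Answer {i}.") for i in range(10)]
    texts = [to_instruct_text(q, a) for q, a in pairs]
    train, val = train_val_split(texts)
    print(len(train), "training examples,", len(val), "validation examples")
```

A fixed seed keeps the split reproducible, so repeated fine-tuning runs are evaluated against the same held-out examples.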

Final thoughts on backend innovations

The backend work on fine-tuning Mistral was essential in enhancing the search functionality of our developer portal. By leveraging diverse data sources, advanced AI models, and a unified dataset approach, the backend teams managed to create a content search experience that is both powerful and secure.

Building search, differently

What started as early experimentation has evolved into a more integrated way of building AI search. Today, our work is centered around Empathy.AI, the space where we design and develop AI search systems grounded in self-hosted, private, and sustainable infrastructure.

By treating compute as part of the product itself, we gain greater control, efficiency, and long-term scalability.

Want to explore how this approach shapes what we build today? Discover more about Empathy.AI or dive into our latest articles.